The start of every year brings a new influx of students. They all have certain things in common:

- They have not progressed in reading and/or writing enough to keep up with their peers.

- They are in need of support.

- They are in need of assessment.

- They have, at some point, been told that they have a reading level.

Many students come with reports on their cognitive abilities from psychologists. Many of them have speech and occupational therapy reports too.

We carefully read these reports before seeing the new students and then we go about gathering more information to help make decisions about supporting them. This information comes from the results of a battery of tests, including:

- Phonological awareness

- Single word reading (regular, irregular and non-words)

- Single word spelling

- Letter sound test (if necessary)

- Oral reading

- Listening comprehension

The more information we have about them, the faster and more efficiently we can provide support. As for any practitioner in any field, the assessments we use determine the path we take to making reading and spelling faster/stronger/better.

The one thing we don’t ask them is:

“What’s your reading level?”

You might think that knowing their reading level would help to inform us. Heck, you might even wonder why we don’t use a test that comes up with a reading level. Surely this is valuable information?

Well, turns out it isn’t.

At Lifelong Literacy, our survival literally relies on our students’ success. There is no margin for error. People simply wouldn’t come back.

To that end, we have to be very precise about our students’ current abilities and their potential for growth. Their assigned ‘reading level’ is the one thing we can completely ignore. It is a blunt instrument, prone to subjectivity, bias and unacceptable variability. It tells us precisely nothing about the student in front of us.

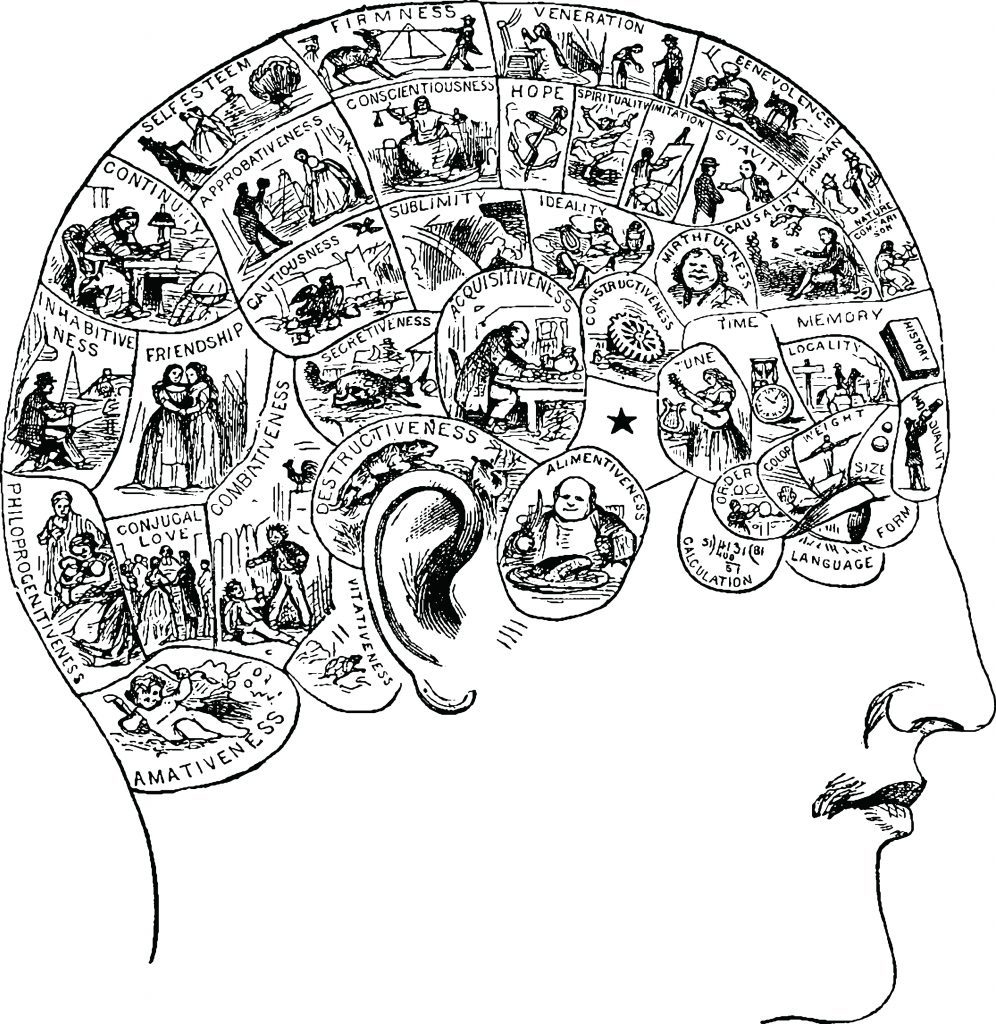

The same thing would happen if a person went to a doctor armed with a ‘phrenology* level’. The doctor would run whatever tests were needed and would ignore the findings of any previous phrenological assessment.

If a person consulted a horticulturist about a plant that wasn’t thriving, armed with a ‘vibration level’ for that plant, what do you think the consultant would do?

The possibilities for analogy are endless.

Book levelling, an extension of child-levelling, is a deeply entrenched yet woefully uninformative practice. In almost all cases, reading levels are derived using Running Records (also known as Benchmark Assessment Summaries and Observation Summaries). A reading level is then matched to a set of books whose level is also arbitrarily determined. Some children never move out of the very early levels, and as a result, are robbed of background knowledge and vocabulary development that their more mobile peers enjoy.

I’m a busy practitioner, running a practice that is, frankly, overflowing with students who aren’t making it at school. Efficiency and accuracy are critical features of my assessment. I have spent decades seeking, refining and updating the process of initial assessment so that students get the best possible help as quickly as we can provide it. If Running Records were fit for purpose, believe me, we’d use them.

Saying this publicly has caused quite an outpouring of disbelief, shock and anger in the teaching community. Making people uncomfortable about what they’re currently doing in education is an unfortunate but necessary by-product of what I do. Beliefs and practices that don’t work well hit my students faster and harder. It is my duty to mention them, because if I don’t, who will?

So my message is: Running Records and the data derived from them are generally ignored by practitioners like me because they give no data of any value. It’s not personal; it’s practical.

Of course, there has been much support for my stance too. Teachers who answered a survey about being mandated to use Running Records were almost unanimous in their distaste for the tests. They reported feeling ignored, undervalued and sometimes even threatened when voicing their doubts. Many testified to the fact that no formal training in administering these tests took place. The survey also revealed that sometimes the data produced from the tests was uploaded to a server somewhere and promptly forgotten about. If we consider the significant amount of time Running Records take per student, this alone presents an unacceptable picture.

There have also been some rather chilling responses, from higher-up figures in various education departments, who have argued that Running Records are only useless if they’re done by incompetent teachers. Way to throw your trainees under the bus! How about arming teachers instead with valid, reliable tests that have minimal margin for error?

I have begun uploading YouTube videos to help suggest #RunningRecordsReplacements, since complaining about a problem is not as useful as offering a solution.

Please, let 2020 be the year we open up dialogue about placing valid tests into the hands of teachers.

*Phrenology: the detailed study of the shape and size of the cranium as a supposed indication of character and mental abilities
